About Me!

Hi! My name is Hyungwoon, but you can call me Hyung :)

If you are here from the NUS School of Computing (SoC) Open House, please feel free to contact me via email with any further questions!

My undergraduate studies were at the National University of Singapore (NUS), in Computer Science and Psychology, with an emphasis on machine learning and AI, as well as clinical and cognitive psychology.

I have previously been a research assistant at the Augmented Human Lab @ NUS, advised by Prof. Suranga Nanayakkara, where I worked on affective computing and assistive augmentation projects. I have also worked with the Clinical Translational Sciences Lab @ NUS under Prof. Kean Hsu, where I assisted with behavioral task programming, eye-tracking configuration, and writing research protocols. I have also been heavily involved with CS1101S: Programming Methodology, taught by Prof. Martin Henz. During my candidature, I was active as both a senior undergraduate tutor and a senior developer on the leadership team for Source Academy, a web-based learning platform developed and maintained by NUS students for CS1101S.

I am currently working as a research engineer at the Augmented Human Lab. My research focuses on the development of context-aware, multimodal cognitive augmentation systems that make use of physiological biosensing and biofeedback to augment learning and memory, particularly in the domain of education. My background in machine learning and AI, psychology, and Human-Computer Interaction (HCI) provides me with a unique perspective and skillset to build human-centred AI systems that align with theories drawn from cognitive sciences, psychology, and interaction design.

I will be joining the Fluid Interfaces group at the MIT Media Lab starting Fall 2026, advised by Prof. Pattie Maes!

Research Interests

Working with Prof. Suranga's AH Lab has been a blessing on my research journey - I was able to work with an interdisciplinary group of people, with experts from various domains such as information systems, interaction design, assistive technology, virtual reality, sensors and haptics, signal processing, machine learning, affective psychology, drone systems, communications and new media, UI/UX design, embedded systems, education pedagogy, clinical psychology... It has been, and continues to be, a great environment for generating and developing new research ideas. My love for bridging fields together to generate interdisciplinary insights has so far thrived within the field of HCI, and I suspect that this will be the case for some time.

In particular, the HCI paradigm of augmentation technology has inspired me quite a bit. The idea of designing and developing technology that can assist, enhance, or amplify human capabilities has been the core drive behind technological progress in history, and I am happy to contribute towards augmentation technology that addresses human cognitive capabilities - especially in terms of learning and memory. What I want to do in the future is to combine my technical skills with my theoretical knowledge to build impactful and lasting systems that can easily be integrated into our daily lives while extending our cognitive capabilities.

I was always interested in the idea of "learning" from the perspective of both a learner and an educator. However, when I started my undergraduate studies at NUS, I was exposed early to machine learning and AI as a field, which was when I realized the potential of looking at "learning" from a mathematical, statistical perspective as well. Many of my earlier research projects (which would later spark my love for research) made use of machine learning and AI, and over time, I realized that I like thinking about the problem formulation aspect of machine learning and AI. For instance, during my student program classification project with Prof. Martin Henz, the most exciting part was formulating the student programs into a representation and selecting the appropriate machine learning model and architecture, such that the different output categories of student programs would align with what we hypothesised as the different kinds of thinking processes that students go through when programming.

Having worked with a diverse range of ML and AI techniques such as regression models, tree-based models and SVMs, deep learning for computer vision (CV) and natural language processing (NLP), reinforcement learning (RL), self-supervised learning (SSL), as well as encoders and transformer architectures (and of course Large Language Models (LLMs)), I now have a better intuition for selecting and constructing the appropriate model architectures for given problems. What I would like to do is to apply this intuition towards building context-aware multimodal AI pipelines that can be incorporated into cognitive augmentation systems.

When I first entered university, my early career aspirations were actually in education and teaching - and I got into research after finding out that you'd likely need a Ph.D. to become a lecturer. What I didn't know was that I would end up enjoying research and completely shift my career direction! I still enjoy teaching quite a bit, and I served as an undergraduate tutor from my 2nd year at NUS until graduation. CS1101S in particular was a large part of that enjoyment: I taught it in 4 separate semesters, first as an avenger (our codename for undergraduate tutor) and later as a reflection tutor, a position usually reserved for graduate students.

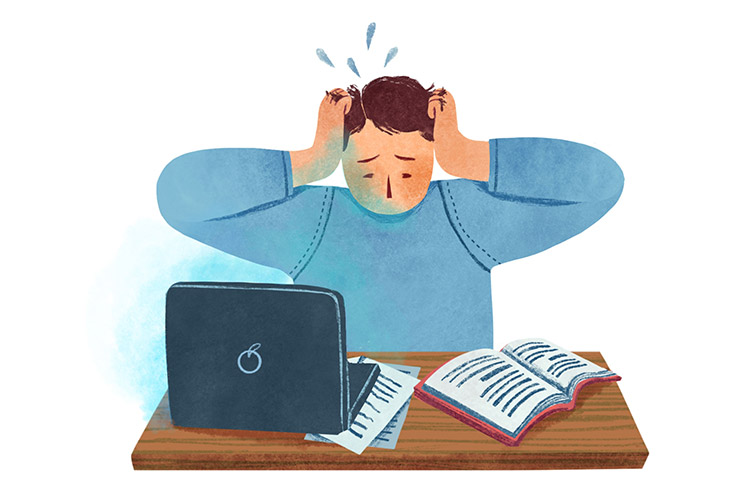

In my 2nd year, I converted my psychology minor (which was initially taken out of pure interest) into a double major, and started looking into clinical psychology specifically. However, after working with Prof. Kean Hsu in his CTS lab, which focused heavily on the impact of cognitive processes and biases on mental health, I realized that what really interested me was the cognitive aspect of psychology. When Prof. Suranga Nanayakkara formed his Centre for Holistic Inquiry into Lifelong Learning (CHILL), I started working with the centre on learning-related projects such as iLEMS and my final year project on acute cognitive stress detection. Over time, I have concretized my interest in cognitive sciences and psychology, with an emphasis on learning, memory, and mental models - and on incorporating this knowledge into building impactful systems that align with the theory.

Signal processing was not my initial focus, but it was always something close to my heart by way of music. I had dabbled in digital composition since secondary school - and I only started looking into signal processing as part of my research interests when I had to work with human biosignal data. Some of my early projects in the AH Lab were on voice acoustics, which introduced me to signal processing as a discipline. However, I credit the CS4347: Sound and Music Computing course, taught by Prof. Wang Ye, as the catalyst that really got me into signal processing, with his intuitive explanations of concepts such as signal decomposition and chord recognition.

Many of my projects have involved signal processing on sensor data; I have worked with sensing modalities such as electroencephalography (EEG) for brain waves, photoplethysmography (PPG) for blood volume pulse (BVP), from which heart rate variability (HRV) and blood oxygen saturation (SpO2) can be inferred, electrodermal activity (EDA) sensors for skin conductance, temperature sensors, and eye trackers. I have also worked with voice acoustics, respiratory rate, facial expressions & gestures, and other behavioral data (e.g. keyboard and mouse use). With (varying degrees of) experience in these signals and sensors, I would really like to make use of this knowledge in building the sensing and biofeedback mechanisms for cognitive augmentation systems.

Non-Research Interests

My interest in music was sparked by video games - to be more specific, Nintendo games that I played when I was young. When I first came to Singapore, I barely knew English (I commonly joke to my friends that I only knew five things: “yes”, “no”, “hello”, “goodbye”, and “I love apples”. “I love apples” was one of those sentences that you got taught early on in Korean primary schools back then, for no real reason other than its simplicity). What fascinated me was how the music seemed to tell a story of its own, changing and evolving with different environments within the game. In secondary school, I started formally studying music as a subject - and I also took up bagpipes for my extra-curricular activity (a rare chance to learn a rare instrument). Since then, I have delved into digital music composition (initially using a pirated version of FL Studio 12, now with a fully licensed version of FL Studio) and live performances (I play both piano and bagpipes), and even did my IB extended essay on music - comparing Tchaikovsky's incidental music for Hamlet with Shostakovich's film music for Hamlet. I've also continued as a competitive bagpiper; I started in secondary school in 2013, and I have been involved with the bagpiping scene ever since. I am currently part of the Singapore Pipe Band Association Exco (for logistics & event organization), and have recently been part of Singapore's Grade 4A Lion City Pipe Band.

Throughout the years, video game music remains one of my lasting hobbies. I love to analyse and enjoy the interplay of narrative and music, and the distinct yet diverse styles brought out by composers from different cultures for different video game genres. Someday, I aim to start a blog about video game music... someday.

Apart from music and video games, I have other hobbies (that might not yet warrant full paragraphs) like origami, digital art, storywriting, cooking, films and cinematography, self-study of history, mythology and philosophy, etc. I partake in each of these only every now and then, but all of these diverse experiences contribute towards my general knowledge base and give me experiences that I can build on (especially history, mythology, and philosophy - these provide a wonderful basis for reasoning about real-world issues and challenges, and sometimes even complement my research directions and interests).

Selected Projects

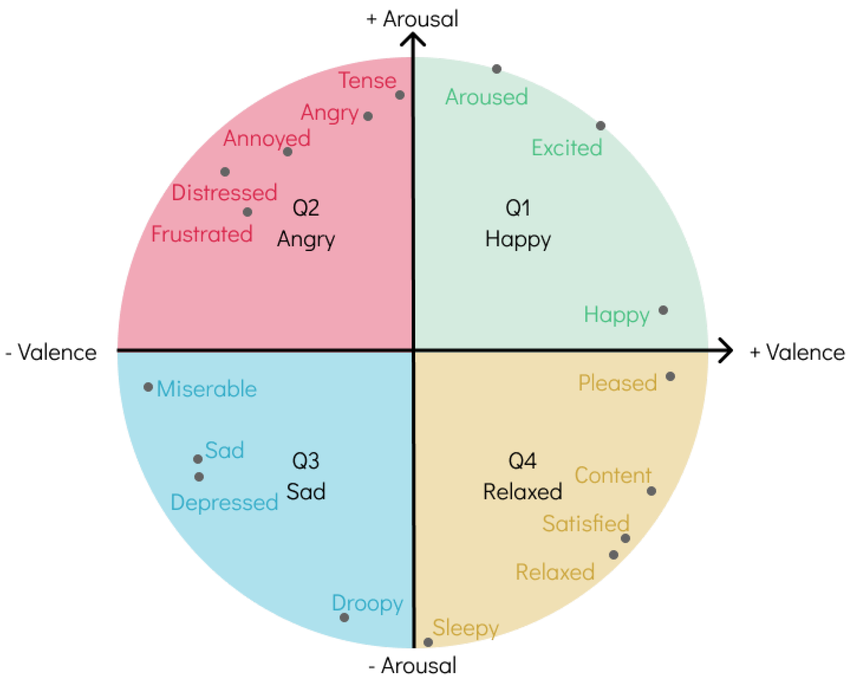

Project in collaboration with an industry partner. This project aims to build an affect recognition model with a state-of-the-art AI architecture, with the goal of integrating the model into wrist-worn / HMD wearable devices for affect-aware recommendation systems. (We have a workshop paper at NeurIPS '25, presented this year!)

Project in collaboration with NUS Centre for Teaching, Learning and Technology (CTLT). We built an AI-driven platform that can detect and flag the use of prohibited applications during open internet examinations. Video recordings and keyboard/mouse data are used by the platform to estimate the likelihood of prohibited applications being used - a surprisingly non-trivial task!

This student research project focused on detection of acute cognitive stress that arises when students are working on mentally demanding tasks, especially when recalling information under pressure. Multimodal machine learning techniques were used alongside collected biosignal data (e.g. HRV, EDA, Temp) to build a multimodal stress classification model. [Report available upon request]

This project was initiated under CHILL @ NUS. The iLEMS system is a web-based platform that integrates course metadata with student behavioral and cognitive state data collected during course time to analyze and present the learning and engagement levels of students over time, giving instructors a more holistic view of the classroom. The platform is still a work in progress under CHILL.

Emplity is my startup; we build AI-driven applications for mental health professionals in training, and we are currently undergoing trials with institutes of higher learning and universities. If this sounds interesting to you as a potential collaborator / client, feel free to reach out!

This student research project focused on classifying student programs through low-level program traces extracted from abstract syntax trees (AST). Both standard machine learning models and LLMs were used to cluster programs based on these traces, with significant results. [Report available upon request]

MarkBind is a command-line tool that helps to generate dynamic websites from simple markdown text. It is built and maintained by students of the National University of Singapore (NUS). I began work on it as a junior developer in my 3rd year, and continued to work as a senior developer until graduation.

This was part of an ongoing research project to build a navigation algorithm for a robotic guide dog in unseen environments, for the purpose of guiding visually impaired users. This project specifically focused on unseen navigation and stair climbing.

Source Academy is a web-based platform for interactive learning, designed around the Structure and Interpretation of Computer Programs (SICP) textbook, and used for the CS1101S: Programming Methodology I course. It is built and maintained by students of the National University of Singapore (NUS). I began work on it as a junior developer in my 2nd year, and continued to work as a senior developer until graduation.

Publications

Stress is a critical determinant of both short-term well-being and long-term health. While wearable sensors have enabled continuous monitoring of stress through physiological signals, existing approaches that rely only on current physiology have shown limited success. Prior work suggests that the previous night's sleep is predictive of stress, yet current methods typically use only coarse sleep summaries (e.g., duration, resting heart rate). In this paper, we argue that fine-grained sleep physiological data can provide richer insights for stress detection. We collect a month-long smartwatch dataset comprising both day-time and night-time physiological signals, including detailed sleep-derived features, and train two models -- XGBoost and a custom multi-modal neural network. Our results provide initial evidence that incorporating fine-grained sleep features significantly improves stress detection, opening up several promising directions for future research.